The revenue-first framework to evaluate tests without getting hoodwinked by false winners and phantom uplift.

Bonjour Millionaire,

Remember when you learned about A/B testing as a baby marketer, and started suggesting it in meetings anytime you wanted to sound smart?

“Let’s test it.”

“Have we tested it?”

“Feels like a test.”

Just me?

Now that I’m grown (and old, so very old) I’ve learned that unfortunately our A/B tests are often lying to us.

Because the metrics we use to read them – conversion rate and AOV – can’t reliably predict or solve for revenue.

And revenue is the whole point, as we know.

You may have suspected something was off, when you’ve:

💣: Run multiple A/B tests that ended in the “no decision” zone.

1 variation boosts conversion and tanks AOV, the other does the opposite. Great! Now what?

💣: Shipped a “winning” test that actually ended up losing money.

You trusted the data, but the data failed you.

I’m not here to lament. I’m bringing a solution.

A new, fancy metric is available to us.

It knows where CVR & AOV mislead your teams.

And, it measures and predicts real revenue impact.

It’s called… RevenueIQ.

And you don’t need to be a data scientist to understand RevenueIQ results and take action.

AB Tasty breaks it all down in this handy article, which I’ve read and applied to my work.

If you really want to nerd out, read the ebook. (Click this to impress me.)

Otherwise, continue on for the cold, hard truth about A/B testing – and how to start running smarter, easier tests that make more $$$$.

RIGHT NOW, A/B TESTING IS MORE LIKE MAY/BE TESTING

When we talk about CRO, what we’re really trying to get at is more revenue. Obviously.

CVR & AOV rarely tell the full revenue story.

They move totally independently, which is why 1 often goes up while the other goes down.

Hence, CRO’s dirty little secret: most tools evaluate AOV & CVR separately.

This is why the same exact test looks “fine” in 1 dashboard, “bad” in another, and “f*cking confusing” everywhere else.

And when you can’t reliably model actual revenue…you’re going to default to optimizing for conversions.

This makes traditional A/B testing incomplete at best.

And outright misleading at worst.

I’ll explain what we can do about it.

THE PROBLEM WITH CVR AND AOV

CVR and AOV don’t play nice with each other.

CVR tells you how many people converted.

It does not tell you how much they spent.

AOV tells you how much they spent.

It does not tell you how many people converted.

We need both, because a higher conversion rate doesn’t guarantee more revenue.

Would you rather have 5 customers spend $500 each or 50 customers spend $5 each?

(^Hardest math of this article, promise. Unless you like hard math.)

But, when we try to bring in AOV, things get even trickier.

It’s easily skewed by high-value orders and other outliers.

The customer who drops $10K on body wash, bless her, can dominate the average.

And even without these (dream) customers, ecom order values are naturally lopsided.

Most people buy something small. But a consistent minority buy a lot more. Those big orders stretch the order-value distribution to the right and drag the AOV up with them.
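If you want to see the skew for yourself, here’s a tiny sketch. The order values are made up for illustration (they’re not from any real store), and it only uses Python’s standard `statistics` module.

```python
from statistics import mean, median

# Hypothetical order values in dollars, invented for this example.
typical_orders = [18, 22, 25, 30, 35, 40, 45, 60, 80, 120]
whale_order = 10_000  # the body wash enthusiast

all_orders = typical_orders + [whale_order]

aov_without_whale = mean(typical_orders)  # average of the everyday orders
aov_with_whale = mean(all_orders)         # same orders plus one outlier
median_with_whale = median(all_orders)    # the middle order barely notices her

print(f"AOV without the big order: ${aov_without_whale:,.2f}")
print(f"AOV with the big order:    ${aov_with_whale:,.2f}")
print(f"Median with the big order: ${median_with_whale:,.2f}")
```

One order out of eleven moves the average from about $48 to about $952, while the median stays at $40. That’s why a single lucky customer landing in one variation can make AOV look like a win.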

Your reporting gets screwed. Everyone’s confused.

And often, when you do have a “winner” with traditional A/B testing, the math isn’t exactly mathing.

All you know is 1 variation is SUPPOSED to perform “better.” But AOV is so noisy that even huge samples swing around on random chance alone.

And, with no clear view of the true revenue impact, you’re still making massive decisions on vibes.

Imprecise metrics create imprecise A/B test results.

We need to make ACTUAL revenue we can PROVE. And, more importantly, REPEAT.

Here’s how.

BREAKING NEWS: TO MAKE MORE REVENUE, YOUR TESTS MUST BE REVENUE-CENTRIC.

To recap: traditional testing tools can’t cleanly model out the revenue impact of a test.

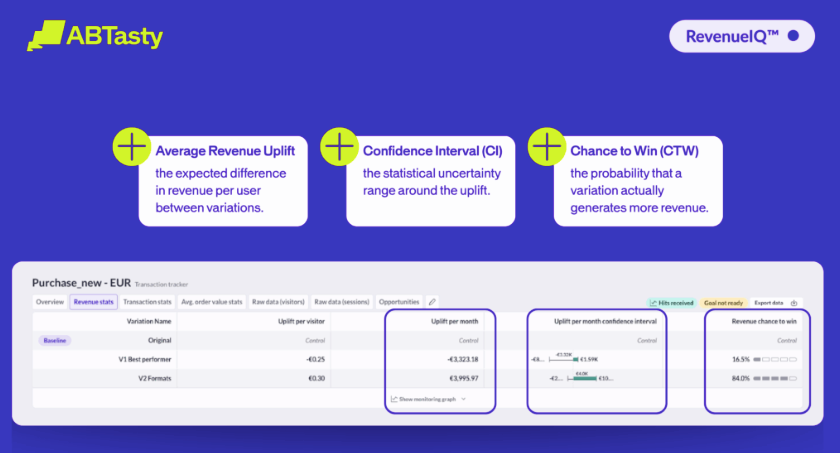

RevenueIQ fixes this by evaluating your tests using revenue per visitor (RPV), which captures CVR and AOV together.

(This is important to know, whether you use RevenueIQ or not, for the record.)
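Here’s a minimal sketch of why revenue per visitor captures both metrics: revenue ÷ visitors works out to CVR × AOV. The `Arm` class and every number below are hypothetical, invented for illustration. This is plain arithmetic, not RevenueIQ’s actual model.

```python
from dataclasses import dataclass


@dataclass
class Arm:
    """One side of an A/B test (hypothetical structure for this example)."""
    name: str
    visitors: int
    orders: int
    revenue: float

    @property
    def cvr(self) -> float:
        return self.orders / self.visitors   # conversion rate

    @property
    def aov(self) -> float:
        return self.revenue / self.orders    # average order value

    @property
    def rpv(self) -> float:
        return self.revenue / self.visitors  # revenue per visitor


# Made-up results: the variation converts more visitors but at smaller baskets.
control = Arm("control", visitors=10_000, orders=300, revenue=15_000.0)
variation = Arm("variation", visitors=10_000, orders=330, revenue=14_850.0)

for arm in (control, variation):
    print(f"{arm.name:>9}: CVR={arm.cvr:.2%}  AOV=${arm.aov:.2f}  RPV=${arm.rpv:.3f}")
```

In this made-up test the variation “wins” on CVR (3.30% vs 3.00%) and still brings in less money per visitor ($1.485 vs $1.50). Read CVR alone and you ship a loser.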

If you want to know exactly how RevenueIQ does this, congrats on your GMAT score, please read the ebook. And if you want the TLDR: this article is shockingly digestible.

Folding this metric into your calculations gives you:

- Actual revenue impact (not a guess)

- Chance-to-win

- Best case/worst case scenarios

- Monthly revenue projections
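For the curious, here’s roughly what numbers like these can look like under the hood. To be loud about it: this is NOT how RevenueIQ computes anything (the ebook covers that). It’s a generic bootstrap sketch on simulated data, and the function names, the traffic, and the order values are all assumptions I made up for the example.

```python
import random


def bootstrap_rpv_comparison(control, variation, n_boot=500, seed=42):
    """Resample per-visitor revenue and compare mean RPV between two arms.

    Returns (chance_to_win, worst_case, best_case). The two cases are the
    2.5th and 97.5th percentiles of (variation RPV - control RPV).
    """
    rng = random.Random(seed)
    diffs = []
    for _ in range(n_boot):
        # Draw visitors with replacement from each arm, then compare RPV.
        c = rng.choices(control, k=len(control))
        v = rng.choices(variation, k=len(variation))
        diffs.append(sum(v) / len(v) - sum(c) / len(c))
    diffs.sort()
    chance_to_win = sum(d > 0 for d in diffs) / n_boot
    worst_case = diffs[int(0.025 * n_boot)]
    best_case = diffs[int(0.975 * n_boot) - 1]
    return chance_to_win, worst_case, best_case


def fake_visitors(n, cvr, mean_order, rng):
    """Simulated revenue per visitor: $0 for most, a skewed order for a few."""
    return [
        rng.expovariate(1 / mean_order) if rng.random() < cvr else 0.0
        for _ in range(n)
    ]


# Hypothetical traffic: 2,000 visitors per arm, invented conversion rates.
rng = random.Random(7)
control = fake_visitors(2_000, cvr=0.030, mean_order=50.0, rng=rng)
variation = fake_visitors(2_000, cvr=0.036, mean_order=50.0, rng=rng)

chance, worst, best = bootstrap_rpv_comparison(control, variation)
print(f"Chance to win: {chance:.1%}")
print(f"RPV uplift, worst case to best case: ${worst:.3f} to ${best:.3f}")
```

Multiply the uplift per visitor by your monthly traffic and you have a rough monthly revenue range. A purpose-built tool will handle the statistics more carefully than this toy does.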

Instead of a “projected winner,” for example, RevenueIQ delivers a CLEAR winner via Average Revenue Uplift (ARU) per visitor per month.

In short: clarity. With a 95.9% chance to beat the control.

ARU kidding me?

Plus, because RPV combines 2 metrics instead of analyzing them separately, you get results faster, too.

Revenue-first testing gives us:

🌹: Simplified reporting + forecasting

🌹: Clear winners you can defend

🌹: Fewer misleading A/B tests

🌹: More strategic experimentation

🌹: Insights & predictions finance will respect

🌹: A roadmap built on hard data instead of crossed fingers

ARU SMARTER THAN THE AVERAGE MARKETER?

I’ll answer that: yes, you are U R.

Because now, you’re solving for the thing that actually matters: revenue, not whichever metric behaved this week.

Particularly when you have the help of an all-in-1 platform that’s user-friendly and accessible for both marketers and product teams, a la RevenueIQ.

This is how to combine the qualitative finesse of a marketer with the quantitative rigor of a data scientist.

This is where I’d normally put a jaw-dropping case study of a brand’s success with RevenueIQ. But, data-driven company that it is, AB Tasty wanted to wait a few months, so the results are conclusive. THIS TRACKS. I respect it. I heard some things anecdotally, though, and I’m impressed! A huge logo that I shop at is using this and it’s going well. Oh no, I’ve said too much.

Actually, let me say 1 more thing: if you schedule a walkthrough with their team, AND mention I sent you, you can unlock a 2-week free trial of RevenueIQ & AB Tasty.

If you qualify, AB Tasty will put on the white gloves. I asked especially.

Peruse the quick article on your new revenue-first model, or go even deeper on the mechanics with the ebook.

Fix your metrics, make more money.

ARU ready? Yes, you are.

Yours,

Ari